Hey gang, I’m wondering how you’d like me to proceed given the following.

The Plan

For context, the plan is (or was) to first update FastMath so that it delegates to StrictMath wherever I had previously changed it to delegate to Math, and to update the tests in Hipparchus and Orekit accordingly. From there, we can determine whether results are consistent across platforms.

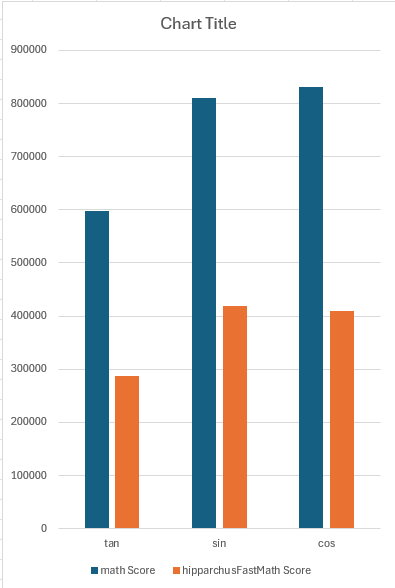

The next step is to replace some StrictMath calls with the custom implementations that were present before my first MR, in the cases where those custom implementations are faster than StrictMath (though still slower than Math), e.g., FastMath::sin, cos, and tan.

The Problem

Most test breakages are tolerable, but I’ve hit a (potential) bump with StrictMath::exp:

FastMathTest::testExpSpecialCases is failing, and shows that

StrictMath.exp(1.0) == StrictMath.nextUp(Math.E)

rather than

StrictMath.exp(1.0) == Math.E

The difference in double precision: 4 * machEps == 4 * Precision.EPSILON

The difference between the long bits: 1L

(notably, Math.exp(1.0) == Math.E exactly)
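In case anyone wants to reproduce the comparison on their own platform, here is a minimal sketch using only the JDK. The class name ExpComparison and the helper bitDistance are made up for illustration and are not part of Hipparchus or Orekit; the helper assumes both arguments are positive and finite, which holds for values near e.

```java
class ExpComparison {
    /**
     * Signed distance between two doubles, counted in representable values.
     * Assumption: both arguments are positive and finite.
     */
    static long bitDistance(double a, double b) {
        return Double.doubleToLongBits(a) - Double.doubleToLongBits(b);
    }

    public static void main(String[] args) {
        double strict = StrictMath.exp(1.0);
        double math = Math.exp(1.0);

        System.out.println("Math.E              : " + Math.E);
        System.out.println("Math.exp(1.0)       : " + math);
        System.out.println("StrictMath.exp(1.0) : " + strict);

        // Difference as a double and as a count of representable values
        System.out.println("strict - Math.E     : " + (strict - Math.E));
        System.out.println("bit distance        : " + bitDistance(strict, Math.E));

        // Spacing between adjacent doubles at Math.E, for scale
        System.out.println("ulp(Math.E)         : " + Math.ulp(Math.E));
    }
}
```

The bit distance is the same quantity as the long-bits difference quoted above, so it should be directly comparable across machines.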

No matter how I look at it, this seems like a problem, especially when we put the actual values next to a reference (WolframAlpha’s e):

Math.E : 2.718281828459045

StrictMath.exp(1): 2.7182818284590455

WolframAlpha e : 2.7182818284590452353...

StrictMath itself delegates to FdLibm.Exp, whose source comment says the following:

* Special cases:
* exp(INF) is INF, exp(NaN) is NaN;
* exp(-INF) is 0, and
* for finite argument, only exp(0)=1 is exact.
*
* Accuracy:
* according to an error analysis, the error is always less than
* 1 ulp (unit in the last place).

So we shouldn’t expect it to be exact but, unless I’m misunderstanding, this is more than one ULP of error, right?
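One way to check the ULP question directly would be to measure each candidate against a high-precision reference instead of against Math.E. The sketch below is just that, a sketch: UlpErrorCheck, E_REF, and ulpError are hypothetical names, and the reference digits of e are typed in by hand to 50 decimal places on the assumption that this is far more than a double can resolve.

```java
import java.math.BigDecimal;
import java.math.MathContext;

class UlpErrorCheck {
    // Assumption: 50 decimal places of e stand in for the true value.
    static final BigDecimal E_REF =
            new BigDecimal("2.71828182845904523536028747135266249775724709369995");

    /** Absolute error of x relative to E_REF, in units of Math.ulp(x). */
    static double ulpError(double x) {
        BigDecimal exact = new BigDecimal(x);          // exact binary value of x
        BigDecimal ulp = new BigDecimal(Math.ulp(x));  // spacing of doubles at x
        return exact.subtract(E_REF).abs()
                    .divide(ulp, MathContext.DECIMAL64)
                    .doubleValue();
    }

    public static void main(String[] args) {
        System.out.println("Math.E              : " + ulpError(Math.E) + " ulp");
        System.out.println("Math.exp(1.0)       : " + ulpError(Math.exp(1.0)) + " ulp");
        System.out.println("StrictMath.exp(1.0) : " + ulpError(StrictMath.exp(1.0)) + " ulp");
    }
}
```

The point of going through BigDecimal is that the FdLibm bound is stated relative to the true value of exp(x), not relative to Math.E, so the two distances can differ.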

Do you (the Orekit and Hipparchus developers) want to go ahead with the initial StrictMath delegation anyway? Should I instead swap to the custom implementation?
