To be clear, I’m happy to see it as a minor version myself! Makes approvals much easier.

Hi folks,

I’m bumping this thread because I’m having second thoughts on this.

As @afvy pointed out, there are test failures (on both the Hipparchus and Orekit develop branches, see issues 413 and 1794) with JDK8 on Apple machines. This is due to delegating to Java-native Math in place of the in-house FastMath for “intrinsic” functions (exp, log, …). It’s about 150 failures, and they exceed 200 with Java >= 17. In my understanding, this is because (unlike StrictMath, or FastMath I guess) Math does not guarantee bit-for-bit identical results across platforms. That’s something I didn’t fully appreciate at the start of this discussion.

Let’s be honest, part of the problem is probably that some tests are poorly designed, and we shouldn’t do assertions like that.
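As an illustration of what a less brittle assertion could look like, here is a minimal sketch that compares two doubles with a tolerance expressed in ULPs instead of requiring exact equality. The class `UlpAssert` and its methods are hypothetical names made up for this example; they are not part of Hipparchus, Orekit or JUnit, and the 4 ULP tolerance in `main` is an arbitrary choice.

```java
/** Hypothetical helper for illustration only, not part of Hipparchus or Orekit. */
final class UlpAssert {

    private UlpAssert() {
    }

    /** Distance between two finite doubles, measured in ULPs of the expected value. */
    static double ulpDistance(final double expected, final double actual) {
        return Math.abs(actual - expected) / Math.ulp(expected);
    }

    /** Fails if actual is further than maxUlps away from expected. */
    static void assertWithinUlps(final double expected, final double actual, final double maxUlps) {
        final double distance = ulpDistance(expected, actual);
        if (!(distance <= maxUlps)) { // written this way so that NaN is rejected too
            throw new AssertionError("expected " + expected + " but got " + actual
                                     + " (" + distance + " ULP apart, allowed " + maxUlps + ")");
        }
    }

    public static void main(final String[] args) {
        final double x = 0.75;
        // Math.sin is only specified to be within 1 ULP of the exact result,
        // so the comparison leaves a few ULPs of slack instead of demanding equality.
        assertWithinUlps(StrictMath.sin(x), Math.sin(x), 4.0);
        System.out.println("sin(" + x + ") agrees within tolerance");
    }
}
```

A tolerance like this only helps tests whose reference values were produced by one specific implementation; it does not make results reproducible across platforms.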

Beyond that, the fact is that now, people using Orekit on Windows/Linux versus Apple will have different results. I think this problem has many undesirable repercussions:

- It is going to be impossible to reproduce results across platforms

- It will deter contributors on Apple, because their local tests will give different results than the GitLab pipeline

So whilst I do appreciate performance improvements, I’m not so sure about delegating more to Math anymore. Opinions?

Note: differences can also appear on a given platform (Windows or Linux) when switching between JDKs. For example, at the moment on Hipparchus, some test tolerances are set to pass with Java 11 (used by the pipeline), whilst they do not work with Java 8 (which is the actual target version for the library). This is however a different problem, as we don’t upgrade Java often.

PS: if you guys prefer, we can have this conversation in a dedicated thread, since it’s not just about library comparisons and Hipparchus.

Cheers,

Romain.

I agree with this analysis, and I confirm reproducibility was one of the three design goals I explained earlier in this thread. I am however surprised that it is still a problem. After all, IEEE754 is a really good standard, well adopted by processor manufacturers and by library developers, so I would have expected consistency to be almost guaranteed. I was clearly wrong: this goal is still important, and for us really mandatory.

So yes, perhaps we should roll back this change unfortunately.

I wonder whether delegating to StrictMath would resolve the inconsistencies. Obviously the performance improvements wouldn’t be as significant, but it would resolve the need to maintain so much of FastMath.

Have specific functions been pinned down as the culprits? That would be some excellent data to have!

It would. I’m getting a bit lost in your analysis: would delegating to StrictMath still be a performance improvement overall (versus FastMath), or would it be more or less the same? Or worse?

Not yet, we would need to debug or do some sort of trial and error. But we need two OSes with different behaviours.
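One way to do that trial and error could be to dump the raw bits of each delegated function on a fixed input grid, run the same program on both machines, and diff the two outputs. The sketch below is only an assumption about how this could be done: the class name `IntrinsicDump`, the grid (multiples of 0.37) and the list of functions are arbitrary choices, and two-argument functions such as pow are left out for brevity.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.DoubleUnaryOperator;

/** Hypothetical diagnostic tool, not part of Hipparchus or Orekit. */
final class IntrinsicDump {

    private IntrinsicDump() {
    }

    /** Functions to probe, in a fixed order so that outputs can be diffed line by line. */
    static Map<String, DoubleUnaryOperator> functions() {
        final Map<String, DoubleUnaryOperator> map = new LinkedHashMap<>();
        map.put("sin", Math::sin);
        map.put("cos", Math::cos);
        map.put("tan", Math::tan);
        map.put("exp", Math::exp);
        map.put("log", Math::log);
        map.put("log10", Math::log10);
        return map;
    }

    /** One line per (function, input): name, input in hex, raw bits of the result. */
    static String dump(final int samples) {
        final StringBuilder sb = new StringBuilder();
        for (final Map.Entry<String, DoubleUnaryOperator> e : functions().entrySet()) {
            for (int i = 1; i <= samples; i++) {
                final double x = i * 0.37; // arbitrary positive grid, also valid for log
                final double y = e.getValue().applyAsDouble(x);
                sb.append(e.getKey()).append(' ')
                  .append(Double.toHexString(x)).append(' ')
                  .append(Long.toHexString(Double.doubleToLongBits(y))).append('\n');
            }
        }
        return sb.toString();
    }

    public static void main(final String[] args) {
        System.out.print(dump(1000));
    }
}
```

Redirecting the output to a file on each machine and diffing the two files should show which functions, and which inputs, disagree at the bit level.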

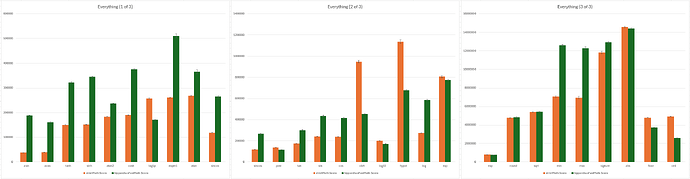

The following is representative of results at large (this is in the original doc., I’ve just disabled the Apache and Math columns):

Higher score → faster, so wherever the orange column is taller is where StrictMath is faster than Hipparchus’s FastMath; e.g., the trig. functions are all faster using the OG FastMath impls. rather than StrictMath impls. I’d say exp and abs are pretty borderline.

The trig. functions in particular are rather unfortunate, as the Math versions are much faster, possibly due to the H. FastMath versions creating new objects on each call (this is what spurred this investigation originally).

Is the Math implementation of these intrinsic functions hardware optimized?

I tried to spin up a VM with Alpine Linux ARM as guest OS using QEMU on my Mac, and I get exactly the same failures as on the host system macOS.

I wonder if these inconsistencies are more widespread, and due to the differences between x86 and ARM architectures.

Cheers,

Alberto

Ok so I don’t know yet what changed, but now on Windows, with Java 8, I get 124 failed tests on the develop branch of Orekit. I’m pretty sure it wasn’t the case a few days ago. I’m a bit puzzled.

Edit: I got it. The tests pass locally in Java 21 and 11 (the latter is used by GitLab). They fail in Java 8. This is the same problem as the one we currently have on Hipparchus.

I have the same issue

All the more reason for the J21 bump!

It’s unfortunate how much pain the switch has caused.

In the failing tests, is it a matter of very tight tolerances and small changes? Or are we seeing changes that can’t be “ignored”?

Hi Ryan,

These are the errors I obtain for the tests in Hipparchus that check the accuracy of the intrinsic functions in FastMath that have been delegated to Math.

Running on macOS ARM with Java 21.

[ERROR] FastMathTest.testCosAccuracy:884 cos() had errors in excess of 0.51 ULP ==> 0.8942842777473787 ULP

[ERROR] FastMathTest.testExpAccuracy:770 exp() had errors in excess of 0.51 ULP ==> 0.7453450516683522 ULP

[ERROR] FastMathTest.testLog10Accuracy:351 log10() had errors in excess of 0.51 ULP ==> 0.6020764780479343 ULP

[ERROR] FastMathTest.testPowAccuracy:743 pow() had errors in excess of 0.51 ULP ==> 0.7404332752787235 ULP

[ERROR] FastMathTest.testSinAccuracy:855 sin() had errors in excess of 0.51 ULP ==> 0.974363017553929 ULP

[ERROR] FastMathTest.testTanAccuracy:913 tan() had errors in excess of 0.51 ULP ==> 0.6887097435600763 ULP
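For readers unfamiliar with this kind of figure, here is a rough sketch of how an error in ULPs can be measured against a higher-precision reference. It is not the code of FastMathTest (how that test builds its reference is not shown in this thread); the class `ExpUlpError`, the 50-digit Taylor series and the grid on [-1, 1) are assumptions chosen to keep the example short, and only exp is covered.

```java
import java.math.BigDecimal;
import java.math.MathContext;

/** Hypothetical illustration, not the actual Hipparchus test code. */
final class ExpUlpError {

    private static final MathContext MC = new MathContext(50);

    private ExpUlpError() {
    }

    /** exp(x) by Taylor series in 50-digit arithmetic; intended for |x| <= 1 only. */
    static BigDecimal referenceExp(final double x) {
        final BigDecimal bx = new BigDecimal(x); // exact conversion of the double
        BigDecimal term = BigDecimal.ONE;
        BigDecimal sum = BigDecimal.ONE;
        for (int n = 1; n <= 60; n++) {
            term = term.multiply(bx, MC).divide(BigDecimal.valueOf(n), MC);
            sum = sum.add(term, MC);
        }
        return sum;
    }

    /** Error of Math.exp(x), in ULPs, measured against the high-precision reference. */
    static double ulpError(final double x) {
        final double computed = Math.exp(x);
        final BigDecimal exact = referenceExp(x);
        final BigDecimal diff = new BigDecimal(computed).subtract(exact).abs();
        return diff.doubleValue() / Math.ulp(exact.doubleValue());
    }

    /** Largest error found on a regular grid over [-1, 1). */
    static double worstUlpError(final int samples) {
        double worst = 0.0;
        for (int i = 0; i < samples; i++) {
            final double x = -1.0 + 2.0 * i / samples;
            worst = Math.max(worst, ulpError(x));
        }
        return worst;
    }

    public static void main(final String[] args) {
        System.out.println("worst exp() error: " + worstUlpError(2000) + " ULP");
    }
}
```

A correctly rounded result would stay at or below 0.5 ULP, which is presumably why the tests above use a 0.51 ULP threshold; Math's specification only promises 1 ULP for these functions.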

Cheers,

Alberto

You are right! Could someone however check whether this also happens with Java 17? Hipparchus will only move forward to Java 17, so that it remains compatible with Android.

For me, Java 17 gives the same results as Java 21.

Hey gang, it sounds like maybe there’s more than one factor at play.

There are the FastMath changes coupled with different Java versions coupled with different OSes.

But, @Serrof , it sounded like you were seeing changes that seemed unrelated. Is that the case?

I can spend some time this weekend (2025-09-05 - 2025-09-07) trying to track things down.

I’ll set things up s.t. I can test systematically the different combinations; i.e.,

{ Java 8, Java 11, Java 17, Java 21 } x { Windows 11, macOS Sequoia }

I’ll include a Linux OS if I can set up my personal machine to dual-boot without much trouble.
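When collecting results over such a matrix, it may help to tag every run with the JVM and platform it came from. A minimal sketch, using only standard system properties (the class name `EnvFingerprint` is made up for this example):

```java
/** Hypothetical helper that labels a test run with its JVM and platform. */
final class EnvFingerprint {

    private EnvFingerprint() {
    }

    /** Single-line description built from standard JVM system properties. */
    static String describe() {
        return String.join(" | ",
                           "java.version=" + System.getProperty("java.version"),
                           "java.vm.name=" + System.getProperty("java.vm.name"),
                           "os.name=" + System.getProperty("os.name"),
                           "os.arch=" + System.getProperty("os.arch"));
    }

    public static void main(final String[] args) {
        System.out.println(describe());
    }
}
```

Recording `os.arch` alongside `os.name` would also help separate an x86 versus ARM effect from an OS effect.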

Sorry about the delay guys. This is on my TODO, but finding time has been tough. I’ll figure it out soon enough, but I think as an interim fix I’d just delegate to StrictMath wherever there are (new) Math delegates.
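For the sake of discussion, the interim fix could look roughly like the sketch below. This is not the actual FastMath code: the class `IntrinsicDelegates` and the system property `example.fastIntrinsics` are hypothetical, and only three functions are shown.

```java
/** Hypothetical sketch of the interim delegation, not the actual FastMath code. */
final class IntrinsicDelegates {

    /**
     * Made-up switch: StrictMath is used unless -Dexample.fastIntrinsics=true is set,
     * in which case calls go to Math and may be replaced by platform intrinsics.
     */
    private static final boolean STRICT = !Boolean.getBoolean("example.fastIntrinsics");

    private IntrinsicDelegates() {
    }

    static double sin(final double x) {
        return STRICT ? StrictMath.sin(x) : Math.sin(x);
    }

    static double exp(final double x) {
        return STRICT ? StrictMath.exp(x) : Math.exp(x);
    }

    static double log(final double x) {
        return STRICT ? StrictMath.log(x) : Math.log(x);
    }

    public static void main(final String[] args) {
        System.out.println(Double.toHexString(sin(0.5)));
    }
}
```

Keeping the choice behind a single flag would make it easy to compare both variants on the same machine while the investigation is ongoing.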

No worries. Yeah, maybe we could use StrictMath there, at least temporarily.

Delegating to StrictMath instead of Math does NOT solve the issue with failing tests on my end (macOS Tahoe, JDK 21).

Hi Alberto,

Thanks for reporting this, it is definitely worrying and needs investigation.

Cheers,

Romain.

Hi Ryan,

Have you had time to investigate further?

If not, could you maybe open the MR to use StrictMath instead?

Cheers,

Romain.